The Agent in My Pocket: Replacing $300/mo Subscriptions with a 40 TOPS Raspberry Pi

Khalil Adib

Senior AI Engineer

Lately, my workflow has been 90% AI-driven. From Cursor and Claude Code to Codex, I've been leaning on the "big three" (Claude Opus 4.5, GPT-5.2, and Gemini 3) to handle the heavy lifting. The speed is addictive, but the bill is untenable.

When you're coding with agents, the "Cloud Tax" is real. Every message you send forces the agent to re-read your entire context window. Input/output tokens accumulate like interest on a bad loan, and eventually, you're paying hundreds of dollars a month just to talk to your own code.
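
To put rough numbers on that compounding, here is a back-of-the-envelope sketch. It assumes every turn resends the full history with no prompt caching, and the token counts and per-million-token prices in the example call are made-up placeholders, not any provider's real rates.

```python
def session_cost(turns, system_tokens, user_tokens_per_turn,
                 reply_tokens_per_turn, input_price_per_m, output_price_per_m):
    """Estimate the cost of a chat session where each turn resends the history.

    Prices are in dollars per million tokens (hypothetical values).
    Returns (total_input_tokens, total_output_tokens, cost_in_dollars).
    """
    history = system_tokens
    total_in = 0
    total_out = 0
    for _ in range(turns):
        history += user_tokens_per_turn
        total_in += history              # the whole history is billed as input again
        total_out += reply_tokens_per_turn
        history += reply_tokens_per_turn  # the reply becomes part of the next prompt
    cost = (total_in / 1e6) * input_price_per_m + (total_out / 1e6) * output_price_per_m
    return total_in, total_out, cost


if __name__ == "__main__":
    # Placeholder numbers purely for illustration.
    tokens_in, tokens_out, dollars = session_cost(
        turns=50, system_tokens=20_000, user_tokens_per_turn=500,
        reply_tokens_per_turn=1_500, input_price_per_m=5.0, output_price_per_m=25.0,
    )
    print(f"input={tokens_in:,} output={tokens_out:,} cost=${dollars:.2f}")
```

The thing to notice is that billed input grows roughly with the square of the number of turns, while output grows only linearly. That is why long agent sessions get expensive so quickly.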

I decided it was time to move the "Brain" back home. I wanted a Pocket Agent: completely offline, zero subscription, and 100% private.

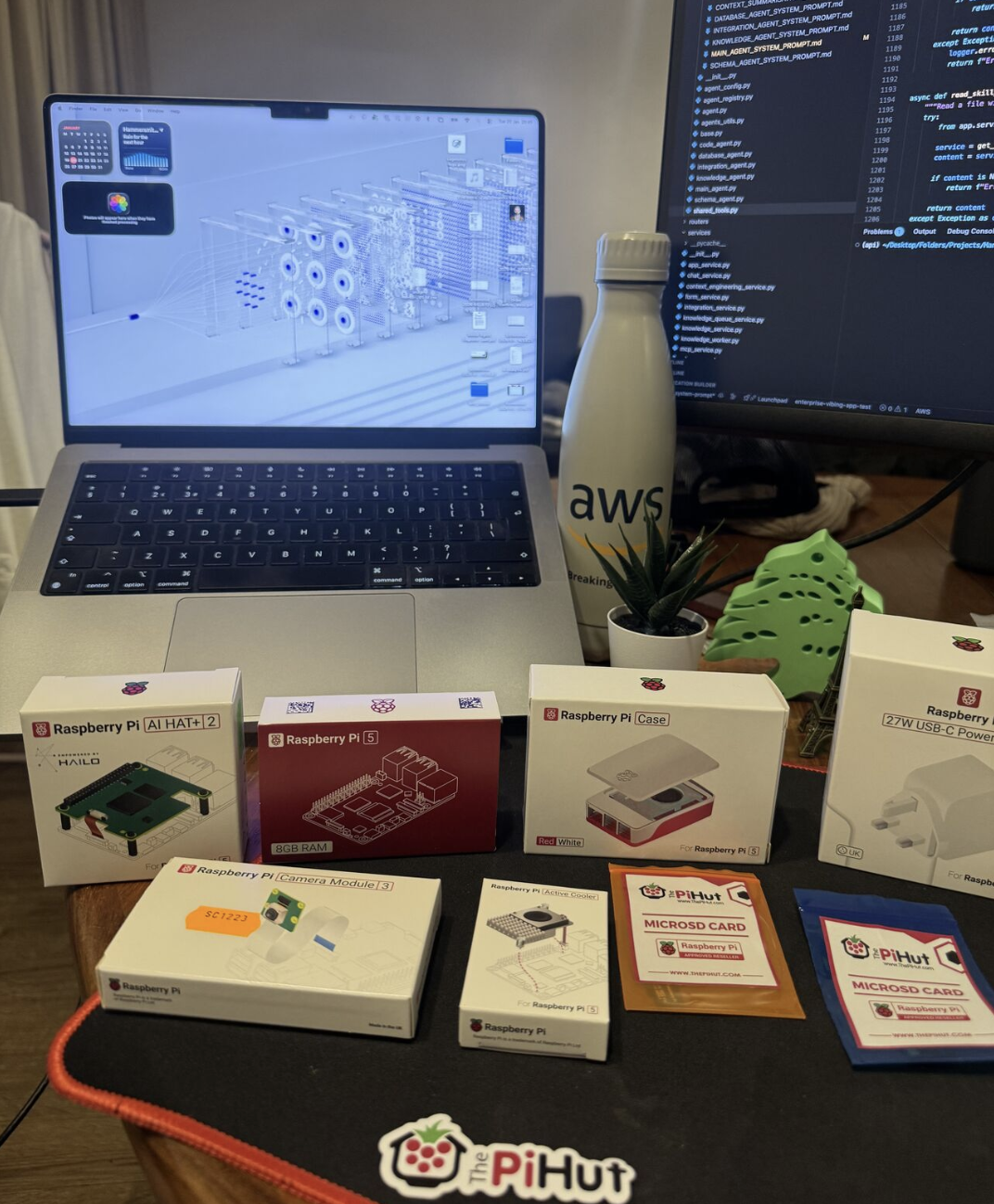

The Hardware: Putting a Supercomputer on a Credit Card

When you think "Edge AI," you think Raspberry Pi. But running an LLM on a standard Pi 5 CPU is like trying to win a Formula 1 race in a golf cart—it's just not built for it.

Then Raspberry Pi dropped the AI HAT+ 2.

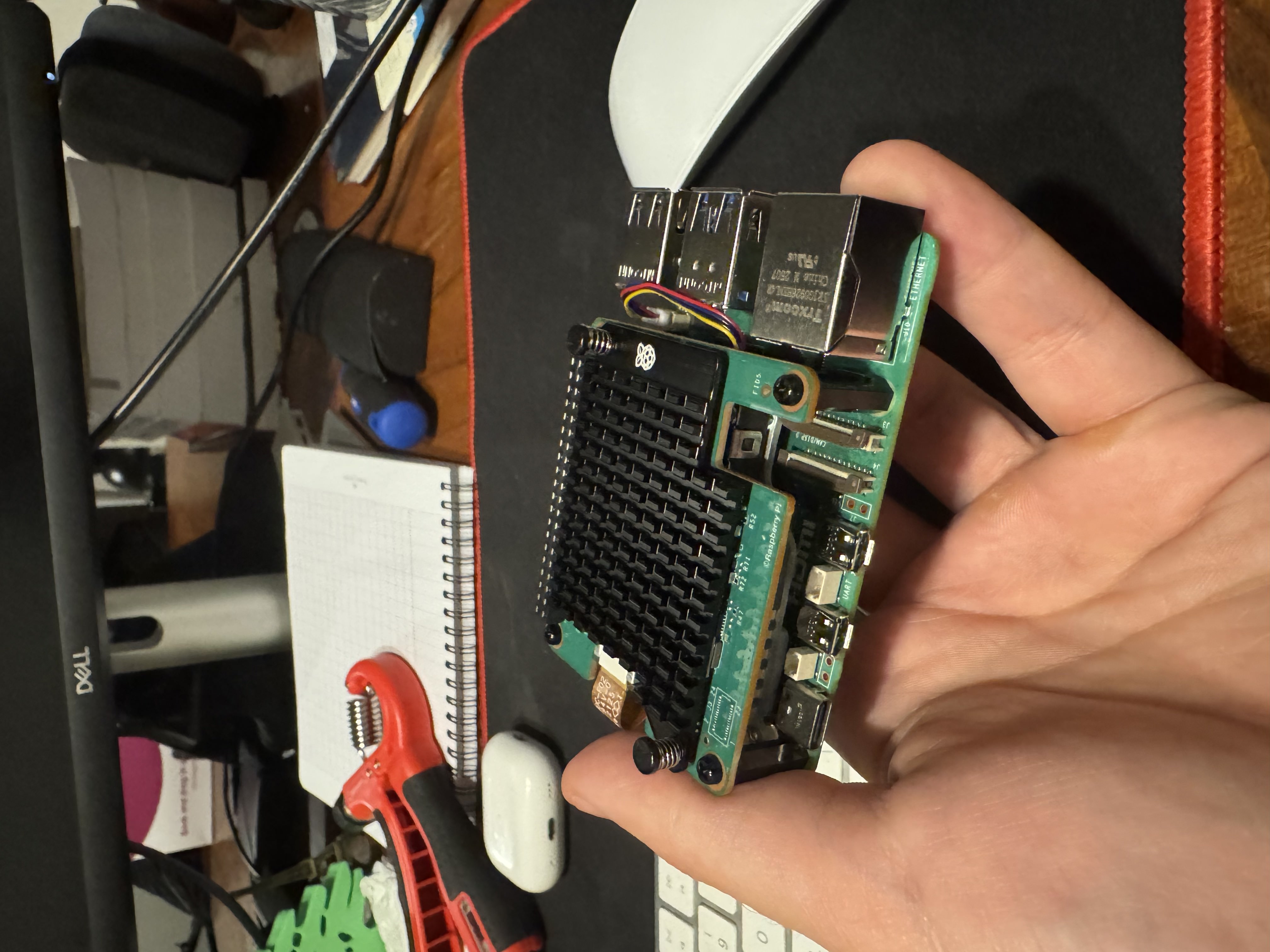

This isn't just a minor update; it's a total architectural shift. While the previous generation was great at "seeing" (object detection), the AI HAT+ 2 is built for generative AI. It features the Hailo-10H chip, delivering a massive 40 TOPS (tera-operations per second) of compute.

But the real "mic drop" feature? 8GB of dedicated LPDDR4X RAM.

### Why the HAT+ 2 is the GOAT for Local AI:

- Memory Isolation: The LLM's weights live in the HAT's 8GB of RAM, so the Pi 5's own memory stays free for the OS and your apps.

- INT4 Optimization: Most small models are quantized to 4-bit. This HAT is specifically engineered to crunch those numbers at lightning speed.

- Thermal Intelligence: It comes with its own monster heatsink because "thinking" this hard gets hot.
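
To make the memory and INT4 points concrete, here is a quick estimate of how much RAM the weights alone need at different precisions. This is a simplified sketch: it ignores the KV cache, activations, and quantization metadata such as scales, so real usage will be somewhat higher.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    params = 1.5e9  # a 1.5B-parameter model
    for bits in (16, 8, 4):
        print(f"{bits:>2}-bit weights: ~{weight_memory_gb(params, bits):.2f} GB")
```

By this estimate a 1.5B model at 4 bits needs well under 1 GB for its weights, which leaves plenty of headroom inside 8GB of dedicated memory.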

| Feature | The "Old" AI HAT+ | The New AI HAT+ 2 |
|---|---|---|
| NPU Chip | Hailo-8 (Vision-Focused) | Hailo-10H (GenAI-Focused) |
| Compute | 26 TOPS | 40 TOPS |
| Onboard RAM | None (Uses Pi RAM) | 8GB Dedicated |
| LLM Support | Struggles / CPU-heavy | Native & Fluid |

The "Silent Genius" Performance

I assembled my build with a Pi 5 Starter Kit, the AI HAT+ 2, and a Camera Module 3 (for future vision tasks). Total cost? Roughly the same as a few months of high-end API usage.

I ran a battery of small and distilled models, and the results were incredible. We aren't just getting text; we're getting streams that arrive faster than I can read them.

| Model | TTFT (First Token) | Tokens Per Second (TPS) |
|---|---|---|
| DeepSeek-R1-Distill-1.5B | 0.7s | 46.5 |
| Qwen2.5-Instruct-1.5B | 0.5s | 58.0 |
| Llama 3.2-1B-Instruct | 0.5s | 58.5 |

The result? A 1.5B model on this HAT feels as snappy as a cloud-based GPT-4o. It's responsive, intelligent, and most importantly, it's mine.
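
For a sense of what those numbers mean in practice, here is a small sketch that turns TTFT and TPS into end-to-end response time and an approximate words-per-minute rate. The 0.75 words-per-token ratio is a rough rule of thumb for English text and an assumption on my part; the 200-token reply length is also just an example.

```python
# (TTFT in seconds, tokens per second), taken from the benchmark table.
BENCHMARKS = {
    "DeepSeek-R1-Distill-1.5B": (0.7, 46.5),
    "Qwen2.5-Instruct-1.5B": (0.5, 58.0),
    "Llama 3.2-1B-Instruct": (0.5, 58.5),
}


def response_time_s(ttft_s: float, tokens_per_s: float, reply_tokens: int) -> float:
    """Time until the last token arrives: first-token latency plus streaming time."""
    return ttft_s + reply_tokens / tokens_per_s


def words_per_minute(tokens_per_s: float, words_per_token: float = 0.75) -> float:
    """Approximate streaming rate in words per minute (ratio is an assumption)."""
    return tokens_per_s * words_per_token * 60


if __name__ == "__main__":
    for name, (ttft, tps) in BENCHMARKS.items():
        total = response_time_s(ttft, tps, reply_tokens=200)
        print(f"{name}: 200-token reply in ~{total:.1f}s, ~{words_per_minute(tps):.0f} wpm")
```

Even the slowest model in the table streams at a rate far above the few hundred words per minute most people read at, so the bottleneck is the reader, not the HAT.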

The "Model Zoo" Challenge

Let's be real for a second: you can't just drag and drop a .gguf file from Hugging Face and expect the NPU to pick it up. If you do that, the Pi CPU will try to run it and likely melt.

To get that 40 TOPS performance, the model must be compiled into the Hailo Executable Format (HEF). While the Hailo Model Zoo is growing fast with ready-to-use models, there is a learning curve if you want to bring your own custom weights. But for those of us who like to tinker, this is where the fun begins.

Phase 2: Goodbye Alexa, Hello "Pi-Jarvis"

Right now, my agent is a text-based genius. But a "Pocket Agent" needs to hear and speak.

My next goal is to bypass the screen entirely. I'm currently sourcing a high-gain microphone and a compact speaker to integrate:

- Whisper (STT): Running on the Hailo-10H for instant speech recognition.

- Piper (TTS): To give my local agent a voice that doesn't sound like a robot from 1995.

I'm building a world where I can walk into my office, ask my Pi to "analyze the latest git commit," and have it explain the logic to me while I make coffee—all with zero bytes sent to a corporate server.
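
Here is how I picture the plumbing, as a minimal sketch. The three stages are plain callables, and `VoiceAgent`, `transcribe`, `generate`, and `synthesize` are hypothetical names of my own. Nothing below calls a real Whisper, Piper, or Hailo API; the demo uses stand-in functions so the flow can be run anywhere.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class VoiceAgent:
    """Chains speech-to-text, the LLM, and text-to-speech (all hypothetical hooks)."""
    transcribe: Callable[[bytes], str]   # STT stage, e.g. a wrapper around Whisper
    generate: Callable[[str], str]       # LLM stage running on the HAT
    synthesize: Callable[[str], bytes]   # TTS stage, e.g. a wrapper around Piper

    def handle(self, audio_in: bytes) -> bytes:
        """Turn one spoken request into spoken audio; silence in, silence out."""
        text = self.transcribe(audio_in).strip()
        if not text:
            return b""  # nothing intelligible was heard, so skip the LLM call
        reply = self.generate(text)
        return self.synthesize(reply)


if __name__ == "__main__":
    # Stand-in stages: bytes are treated as UTF-8 text instead of real audio.
    agent = VoiceAgent(
        transcribe=lambda audio: audio.decode("utf-8"),
        generate=lambda prompt: f"You asked: {prompt}",
        synthesize=lambda reply: reply.encode("utf-8"),
    )
    print(agent.handle(b"analyze the latest git commit"))
```

Keeping each stage behind a simple callable means the microphone, model, and speaker pieces can be swapped or tested on their own before everything is wired to real hardware.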

The era of the "Cloud Tax" is over. The Pocket Agent has arrived.
